There's this assumption that if you use AI to help you code, you're vibe coding: that you're just typing "build me an app" into ChatGPT and shipping whatever comes back. And honestly, I get why people think so. A lot of people are doing exactly that, and the results speak for themselves. AI slop is real.

But that hasn't been my experience. Not even close.

We've Been Here Before

Remember when Wikipedia first became a thing? The immediate reaction from teachers, professors, anyone in academia was: don't use Wikipedia. It's unreliable. It's lazy. If you cite Wikipedia, you're not doing real research.

And sure, if all you did was copy and paste from Wikipedia, that was lazy. But that was never the point of Wikipedia. Wikipedia gave you a curated starting point. It had references at the bottom that you could follow to go deeper. It was a way to orient yourself in a topic before you did the real work.

The people who dismissed Wikipedia entirely missed that. The tool wasn't the problem. Passive use of the tool was the problem.

I think we're in the exact same moment with AI right now.

What I Actually Do with AI

In mid-2025, Andrej Karpathy, the guy who led AI at Tesla and worked at OpenAI, endorsed a term that finally captures what skilled AI usage looks like: context engineering. Shopify's CEO, Tobi Lütke, put it this way: it's "the art of providing all the context for the task to be plausibly solvable by the LLM."

The analogy I like: the AI is a CPU, the context window is RAM, and you are the operating system deciding what gets loaded into memory.

That's not vibe coding. That's engineering.

When I work with AI, I'm not typing a question and hoping for the best. I'm feeding it source code, architecture docs, type definitions, domain constraints. I'm curating what it sees so that what it produces is actually useful. And when it's not useful, I don't just accept it. I dig into why, I adjust the context, and I run it again.

That takes skill. It takes understanding your own system. It takes being an active participant, not a passive consumer.
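To make the "you are the operating system" idea a little more concrete, here is a minimal Python sketch of that role: deciding which pieces of context get loaded before a request. It's an illustration under stated assumptions, not how any particular AI tool works. `ContextItem`, `build_context`, the priority numbers, and the character budget (a crude stand-in for a real token limit) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    label: str      # e.g. "Type definitions", "Library source" (hypothetical labels)
    text: str
    priority: int   # higher = more important to load (hypothetical scheme)


def build_context(items: list[ContextItem], task: str, budget_chars: int) -> str:
    """Greedily load the highest-priority items that still fit in the budget.

    Assumption: character count stands in for a real token limit.
    """
    task_block = f"### Task\n{task}\n"
    used = len(task_block)  # the task itself always gets loaded
    loaded: list[str] = []
    # sorted() is stable, so items with equal priority keep their input order
    for item in sorted(items, key=lambda i: i.priority, reverse=True):
        block = f"### {item.label}\n{item.text}\n"
        if used + len(block) > budget_chars:
            continue  # skip anything that would overflow; a smaller item may still fit
        loaded.append(block)
        used += len(block)
    return "".join(loaded) + task_block


# Usage: curate what the model sees instead of dumping everything in.
items = [
    ContextItem("Domain constraints",
                "A positive and a negative tile of the same kind form a zero pair.", 3),
    ContextItem("Type definitions", "class Tile: value: int; kind: str", 2),
    ContextItem("Full changelog", "x" * 5000, 1),  # too big for this budget
]
prompt = build_context(items, "Add a zero-pair removal feature.", budget_chars=400)
print(prompt)
```

The greedy, priority-first choice is just one simple policy; the point is that a person decides what matters most and what gets left out.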

How I Got Here

Let me back up, because I think my story makes the point better than any definition can.

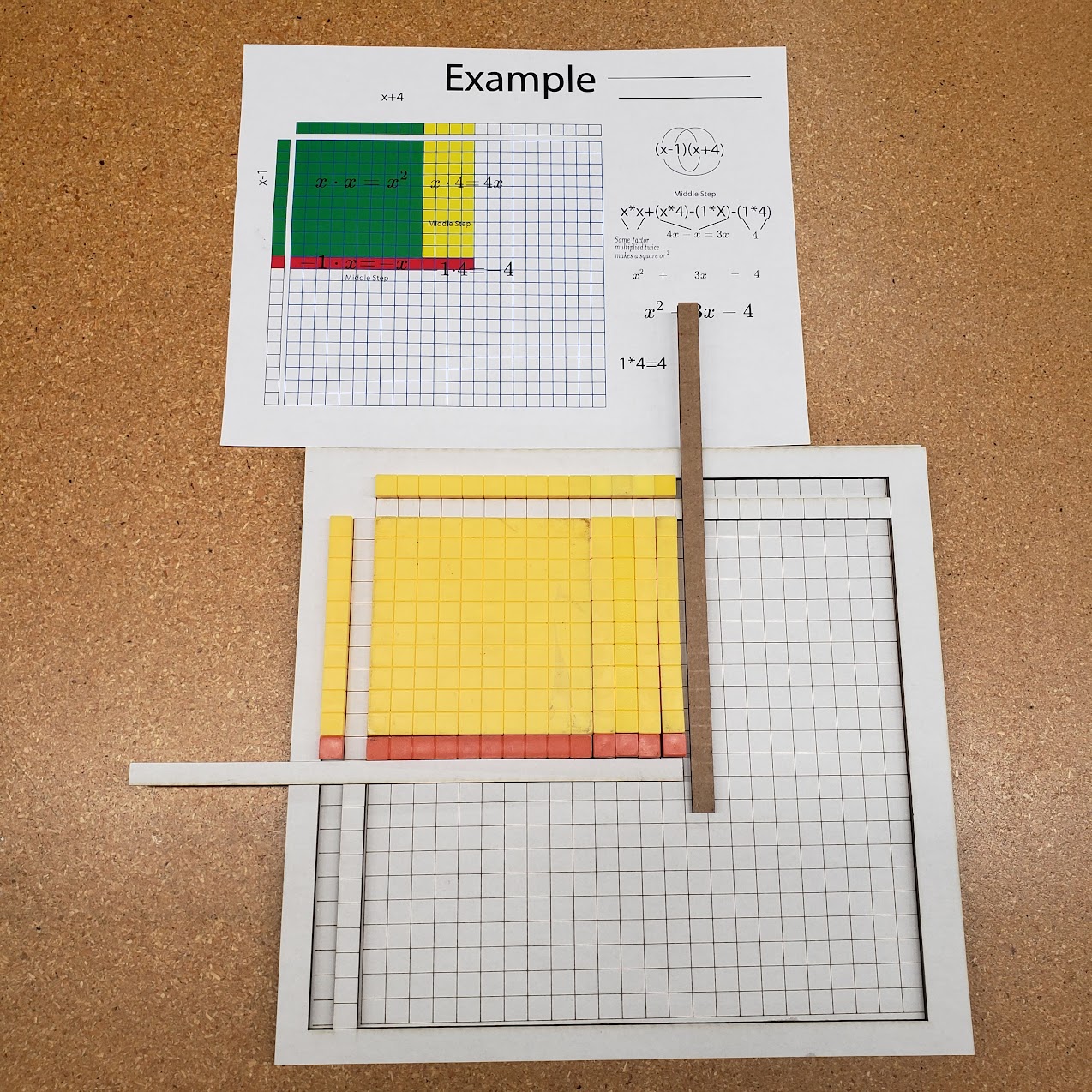

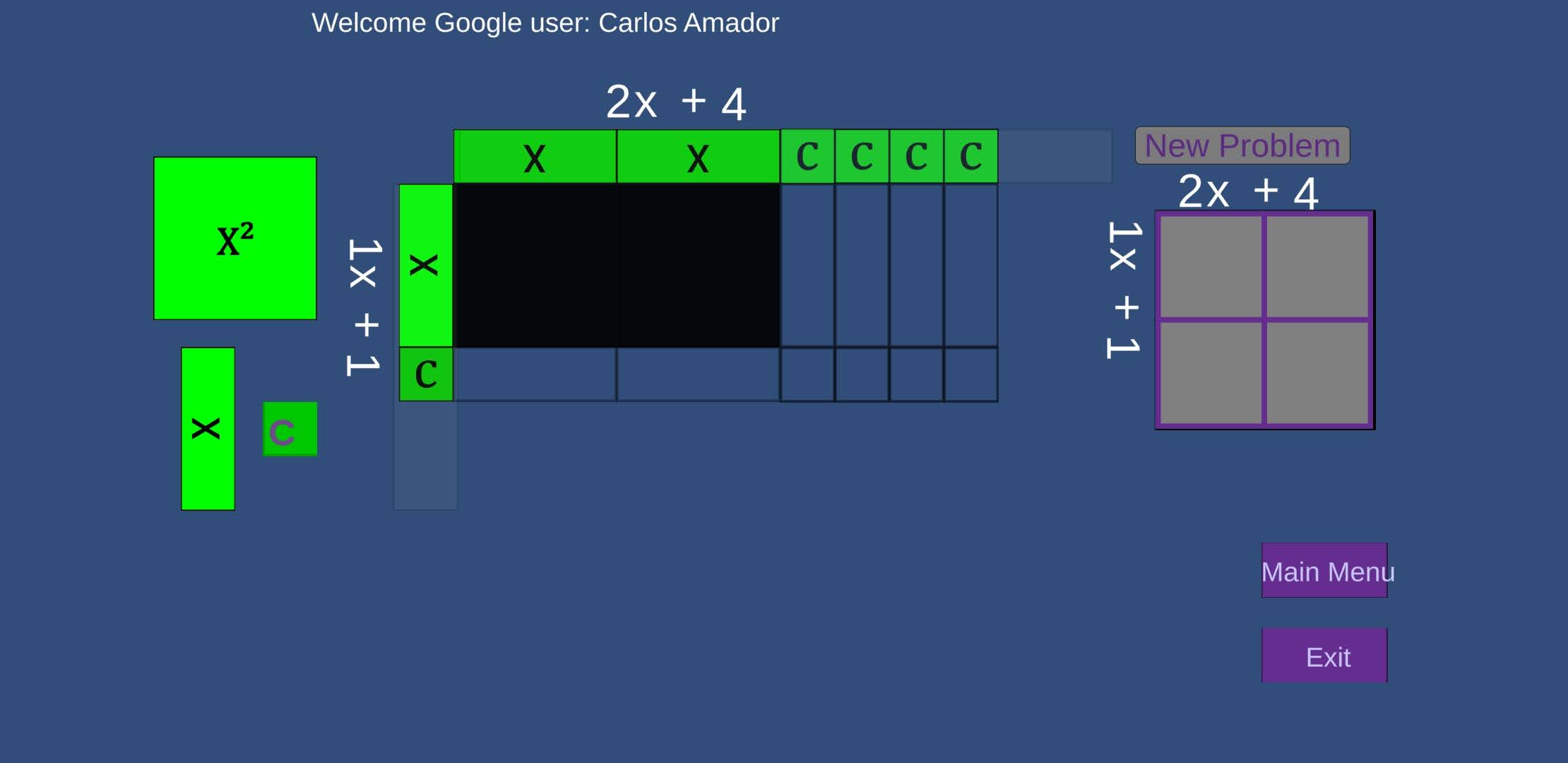

In November of 2020, two full years before ChatGPT was available to anyone, I started learning how to code in Unity. I'm a math teacher. I'd been creating physical manipulative boards for my students, and I wanted to digitize them.

By April of 2021, I'd built an Android-based algebra tiles manipulative with Firebase integration. Self-taught. No AI. Just me, the docs, and a lot of trial and error.

When ChatGPT came onto my radar in January 2023, I decided to pivot to web applications. I went with Blazor since I already knew C# from Unity. And that's when I hit the wall of everything I didn't know I didn't know. Dependency injection. CI/CD pipelines. How to actually set up development and production environments. All the infrastructure stuff that nobody warns you about.

AI helped me learn those things. But I was never passive about it. I didn't copy and paste answers. I needed to understand. When something didn't work, I'd go into the actual source code of whatever library I was using, paste it into the conversation, and say "look at this, what's actually happening here?" And you know what? The answers got way better. I was doing context engineering before the term existed. I just didn't have a name for it.

The Old Code That Changed Everything

Here's the part of the story that really drives it home.

Over those early years, I'd built several small apps in Unity. Some of them were, honestly, kind of bad. I couldn't maintain them because my programming skills weren't there yet. But here's what those apps did have: my domain knowledge. The logic of how math manipulatives should work. The relationships between tiles. The pedagogical flow. Years of teaching experience encoded in imperfect code.

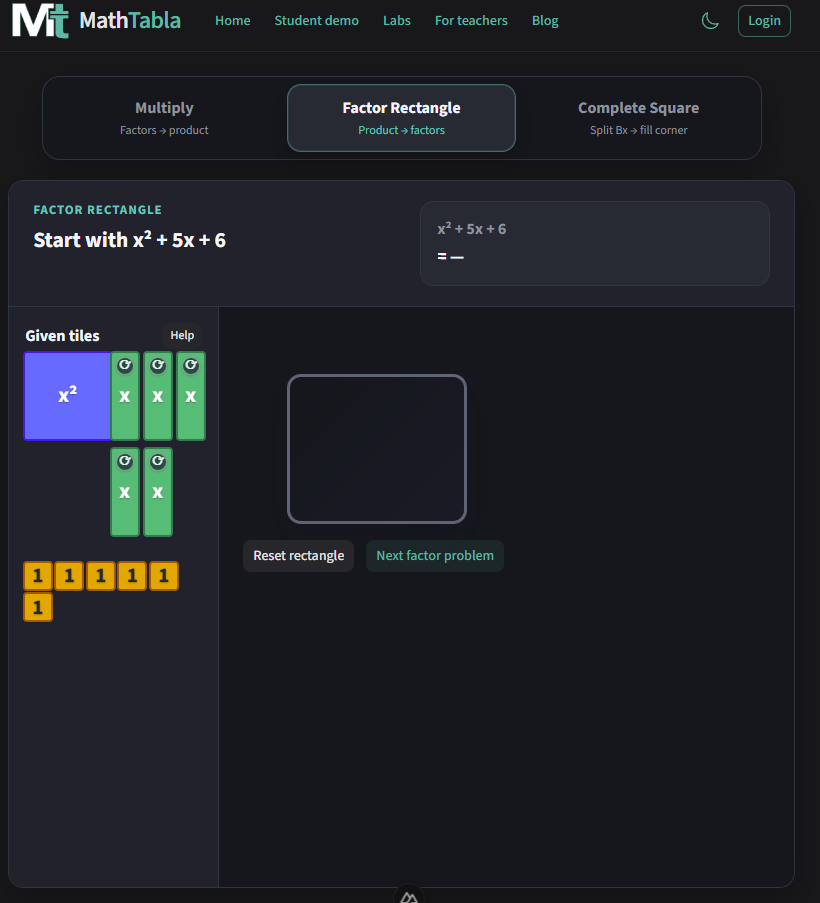

So I tried something. I took the code from one of those old Unity apps and fed it to the AI. Not as a "fix this for me," but as raw context. Here's what this application does. Here's how it works. Port it to web.

In one day, I didn't just recreate the application. I added two features I'd never been able to build on my own.

The old code wasn't good code. It was good context.

You can try the result yourself at mathtabla.com/labs/algebra-tiles.

This Is Bigger Than Code

I'm sharing my story, but this isn't really about me. And it's not just about programming.

Think about how many people are sitting on domain expertise right now, encoded in half-finished projects, rough prototypes, spreadsheets, lesson plans, diagrams. Things they built but couldn't take further because they hit a technical ceiling. Teachers with curriculum ideas they can't implement. Designers with prototypes stuck in Figma. Scientists with analysis pipelines held together by duct tape. Small business owners with workflows trapped in Excel.

All of them have context. None of them are "programmers." And that's the point.

Context engineering isn't about being a better prompt writer. It's about finally having a way to bridge the gap between what you know and what you can build. The domain knowledge was always the hard part. The technical execution is what's getting cheaper.

The Real Question

The conversation around AI right now is stuck on "is it good or bad?" That's the wrong question. The right question is: are you an active participant or a passive consumer?

If you just type "build me an app" and ship whatever comes back, yeah, that's vibe coding, and the results will reflect it.

But if you bring your expertise, curate the context, stay in the loop, and actually learn from every iteration, that's something else entirely. That's context engineering. And everything about learning scales up: how much you can learn, how fast you can learn it, and how long you can stay in that learning loop.

AI doesn't replace your skill. It amplifies it. And what it amplifies depends entirely on what you feed it.